骨骼点检测

适用场景

人体骨骼关键点检测,主要检测人体的一些关键点,通过关键点描述人体骨骼信息。具体应用主要集中在智能视频监控,病人监护系统,人机交互,虚拟现实,人体动画,智能家居,智能安防,运动员辅助训练等等。

支持17个关键点的识别,具体为鼻子,左右眼,左右耳,左右肩,左右肘、左右手腕、左右髋、左右膝、左右脚踝。

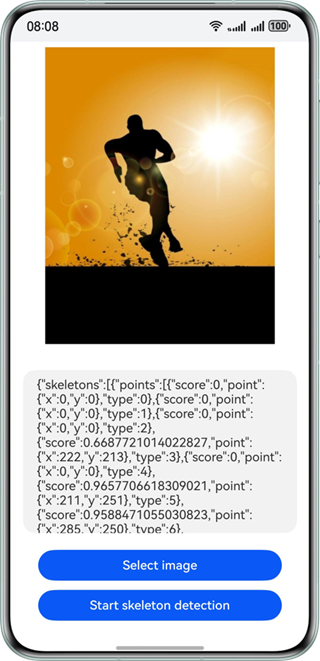

效果如下图所示:

约束与限制

该能力当前不支持模拟器。

| AI能力 | 约束 |

|---|---|

| 骨骼点检测 | - 输入图像具有合适成像的质量(建议720p以上),100px<高度<10000px,100px<宽度<10000px,高宽比例建议5:1以下(高度小于宽度的5倍),接近手机屏幕高宽比例为宜。 |

开发步骤

-

在使用骨骼点检测时,将实现骨骼点检测相关的类添加至工程。

import { image } from '@kit.ImageKit';import { hilog } from '@kit.PerformanceAnalysisKit';import { BusinessError } from '@kit.BasicServicesKit';import { fileIo } from '@kit.CoreFileKit';import { skeletonDetection, visionBase } from '@kit.CoreVisionKit';import { photoAccessHelper } from '@kit.MediaLibraryKit'; -

简单配置页面的布局,并在Button组件添加点击事件,拉起图库,选择图片。

Button('选择图片').type(ButtonType.Capsule).fontColor(Color.White).alignSelf(ItemAlign.Center).width('80%').margin(10).onClick(() => {// 拉起图库,获取图片资源void this.selectImage();}) -

通过图库获取图片资源,将图片转换为PixelMap。

private async selectImage() {let uri = await this.openPhoto()if (uri === undefined) {hilog.error(0x0000, 'skeletonDetectSample', "Failed to define uri.");}this.loadImage(uri)}private async openPhoto(): Promise<string> {return new Promise<string>((resolve, reject) => {let photoPicker: photoAccessHelper.PhotoViewPicker = new photoAccessHelper.PhotoViewPicker();photoPicker.select({MIMEType: photoAccessHelper.PhotoViewMIMETypes.IMAGE_TYPE, maxSelectNumber: 1}).then(res => {resolve(res.photoUris[0])}).catch((err: BusinessError) => {hilog.error(0x0000, 'skeletonDetectSample', `Failed to get photo image uri. code: ${err.code}, message: ${err.message}`);reject('')})})}private loadImage(name: string) {setTimeout(async () => {let fileSource = await fileIo.open(name, fileIo.OpenMode.READ_ONLY);this.imageSource = image.createImageSource(fileSource.fd);this.chooseImage = await this.imageSource.createPixelMap();}, 100)} -

实例化Request对象,并传入待检测图片的PixelMap,实现骨骼点检测功能。

// 调用骨骼点识别接口let request: visionBase.Request = {inputData: { pixelMap: this.chooseImage }};let data: skeletonDetection.SkeletonDetectionResponse = await (await skeletonDetection.SkeletonDetector.create()).process(request); -

(可选)如果需要将结果展示在界面上,可以用下列代码。

let data: skeletonDetection.SkeletonDetectionResponse = await (await skeletonDetection.SkeletonDetector.create()).process(request);let poseJson = JSON.stringify(data);hilog.info(0x0000, 'skeletonDetectSample', `Succeeded in skeleton detection: ${poseJson}`);this.dataValues = poseJson;

开发实例

Index.ets

import { image } from '@kit.ImageKit';

import { hilog } from '@kit.PerformanceAnalysisKit';

import { BusinessError } from '@kit.BasicServicesKit';

import { fileIo } from '@kit.CoreFileKit';

import { skeletonDetection, visionBase } from '@kit.CoreVisionKit';

import { photoAccessHelper } from '@kit.MediaLibraryKit';

@Entry

@Component

struct Index {

private imageSource: image.ImageSource | undefined = undefined;

@State chooseImage: PixelMap | undefined = undefined

@State dataValues: string = ''

build() {

Column() {

Image(this.chooseImage)

.objectFit(ImageFit.Fill)

.height('60%')

Text(this.dataValues)

.copyOption(CopyOptions.LocalDevice)

.height('15%')

.margin(10)

.width('60%')

Button('选择图片')

.type(ButtonType.Capsule)

.fontColor(Color.White)

.alignSelf(ItemAlign.Center)

.width('80%')

.margin(10)

.onClick(() => {

// 拉起图库

void this.selectImage()

})

Button('开始骨骼点识别')

.type(ButtonType.Capsule)

.fontColor(Color.White)

.alignSelf(ItemAlign.Center)

.width('80%')

.margin(10)

.onClick(() => {

// 调用封装的异步处理函数

void this.handleSkeletonDetection();

})

}

.width('100%')

.height('100%')

.justifyContent(FlexAlign.Center)

}

// 封装骨骼点识别的异步逻辑

private async handleSkeletonDetection() {

if(!this.chooseImage) {

hilog.error(0x0000, 'skeletonDetectSample', `Failed to choose image.`);

return;

}

// 调用骨骼点识别接口

let request: visionBase.Request = {

inputData: { pixelMap: this.chooseImage }

};

try {

let data: skeletonDetection.SkeletonDetectionResponse =

await (await skeletonDetection.SkeletonDetector.create()).process(request);

let poseJson = JSON.stringify(data);

hilog.info(0x0000, 'skeletonDetectSample', `Succeeded in skeleton detection: ${poseJson}`);

this.dataValues = poseJson;

} catch (error) {

hilog.error(0x0000, 'skeletonDetectSample', `Failed to get result. Error: ${error}`);

}

}

private async selectImage() {

let uri = await this.openPhoto()

if (uri === undefined) {

hilog.error(0x0000, 'skeletonDetectSample', "Failed to define uri.");

}

this.loadImage(uri)

}

private async openPhoto(): Promise<string> {

return new Promise<string>((resolve, reject) => {

let photoPicker: photoAccessHelper.PhotoViewPicker = new photoAccessHelper.PhotoViewPicker();

photoPicker.select({

MIMEType: photoAccessHelper.PhotoViewMIMETypes.IMAGE_TYPE, maxSelectNumber: 1

}).then(res => {

resolve(res.photoUris[0])

}).catch((err: BusinessError) => {

hilog.error(0x0000, 'skeletonDetectSample', `Failed to get photo image uri. code: ${err.code}, message: ${err.message}`);

reject('')

})

})

}

private loadImage(name: string) {

setTimeout(async () => {

try {

let fileSource = await fileIo.open(name, fileIo.OpenMode.READ_ONLY);

this.imageSource = image.createImageSource(fileSource.fd);

this.chooseImage = await this.imageSource.createPixelMap();

await fileIo.close(fileSource);

} catch (error) {

hilog.error(0x0000, 'skeletonDetectSample', `Failed to open file. Error: ${error}`);

}

}, 100)

}

}